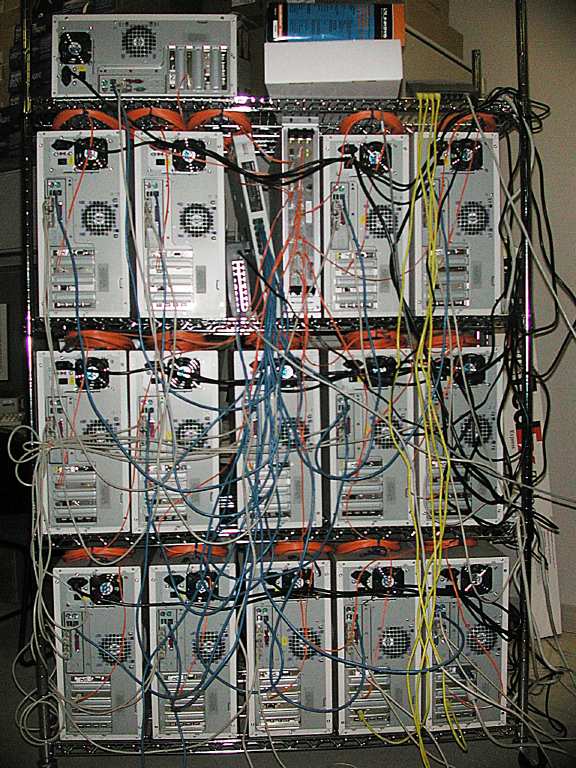

- 16 + 1 spare Tyan S2466N

- with dual Athlon XP 1900 (MP ready),

64KB L1 I+D split caches, 2-way associative, 64B/line

256KB L2 shared cache, 16-way associative, 64B/line

- on-board 3c905 FastEther,

- 512 MB RAM,

- 60 GB IBM Deskstar IDE drive,

- CD-ROM,

- 2nd 3c905 PCI FastEther (for bonding),

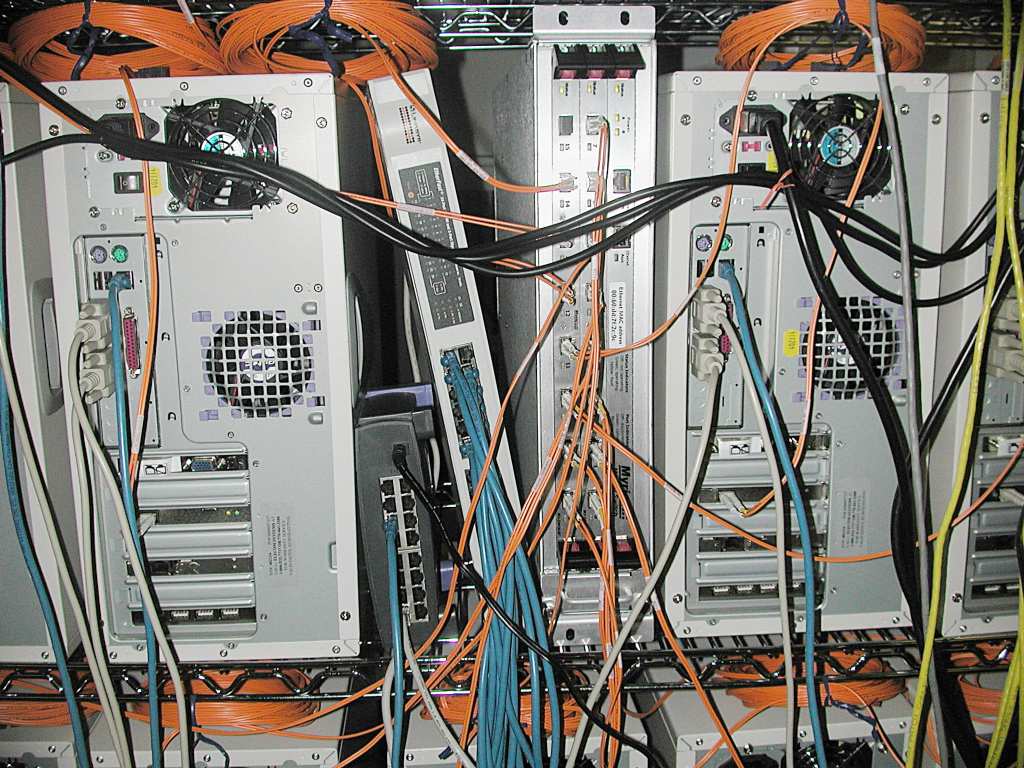

- Myrinet M3F-PCI64B-2 PCI card

- (in mini-towers, not 1U or 2U enclosures, for historic reasons)
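The cache figures listed for the Athlon XP imply a specific number of sets per cache, which matters when reasoning about conflict misses in tuned kernels. The following is a minimal sketch of that arithmetic, assuming each of the split L1 caches (instruction and data) is 64KB on its own; the helper name `cache_sets` is hypothetical and not part of any cluster tooling.

```python
def cache_sets(size_bytes: int, ways: int, line_bytes: int) -> int:
    """Return the number of sets in a set-associative cache.

    sets = total size / (associativity * line size)
    """
    sets, remainder = divmod(size_bytes, ways * line_bytes)
    if remainder:
        raise ValueError("cache size is not a multiple of ways * line size")
    return sets


KB = 1024

# Assumption: 64KB per L1 cache (I and D each), 2-way, 64B lines.
l1_sets = cache_sets(64 * KB, ways=2, line_bytes=64)

# 256KB L2, 16-way, 64B lines, as listed above.
l2_sets = cache_sets(256 * KB, ways=16, line_bytes=64)

print(f"L1 sets: {l1_sets}, L2 sets: {l2_sets}")
```

Under these assumptions, addresses that are a multiple of 32KB apart (sets times line size) fall into the same L1 set, and only two such lines fit at once.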

Cluster networking:

- M3F-SW16M Myrinet 16 port switch, 24 port 3Com (see Setup Notes)

- 16 port FastEther switch (for bonding)

- 24 port FastEther + 2 port GigaEther switch (uplink)

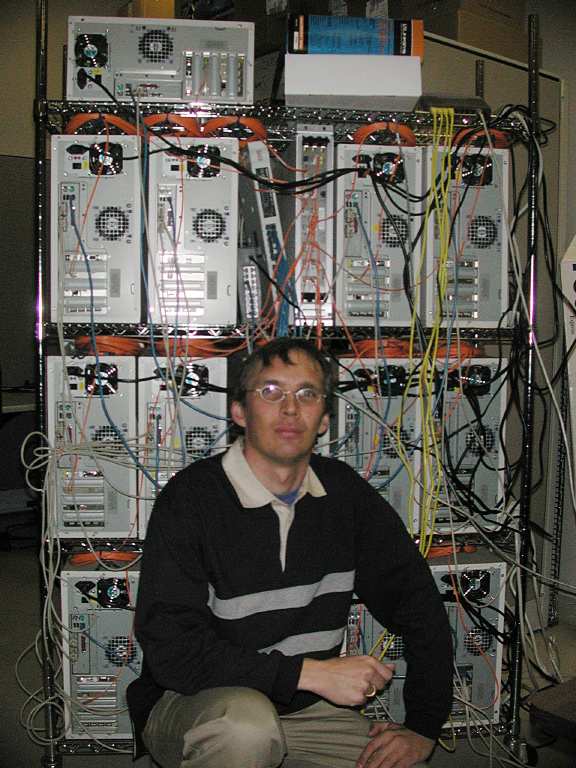

Front View of Cluster